Google is all about “Natural Language Processing,” or NLP for short. It’s a scary-sounding phrase for SEOs, but it doesn’t have to be. The really good news is after you have grasped the main concepts of NLP, it becomes quite easy to develop NLP strategies that will boost your SEO. What’s more, these strategies will be more likely to stand the test of time than old-school approaches.

This post will cover:

- Some disambiguation

- A quick non-technical view of what an NLP algorithm does

- Salience in NLP

- InLinks strategies for using NLP in SEO

NLP Disambiguation

In this context, NLP stands for Natural Language Processing and NOT Neuro-linguistic Programming. This latter concept can confuse people, as it relates to a pseudo-scientific area of psychology that was developed in the 1970s and 80s and has not stood up well to scientific scrutiny. I assure you that the NLP I am discussing here is very real and very useful (for SEOs). There are also several other uses of the phrase “NLP”, but I would like SEOs to distance themselves from the psychological meaning!

What is an NLP, and what does an NLP Algorithm do?

NLP looks at any body of text (it could be anything from a Tweet to a book) and tries to break it into concepts a machine can understand. This usually means breaking the text up into salient phrases, topics, or entities and also defining relationships between these topics. Over the years, what constitutes a “phrase, topic, or entity” has morphed and developed. Old-school SEOs will have heard the phrase “n-grams”, which Google initially used to work out the difference between, say, “Paris,” “Hilton,” and “Paris Hilton” (even though “Paris Hilton” still describes at least two unique topics). Then ideas like “Word2Vec” helped to disambiguate “Paris Hilton,” the hotel, from “Paris Hilton,” the celebrity, by understanding that words like “hotel” and “France” are semantically close to one object but not the other.
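To make the n-gram idea concrete, here is a minimal Python sketch. The `ngrams` helper is purely illustrative (it is not something Google is known to use); it simply lists every run of one or two consecutive words, which is how “Paris,” “Hilton,” and “Paris Hilton” become three separate candidate phrases.

```python
def ngrams(text, n):
    """Return every run of n consecutive words in text, lowercased."""
    words = text.lower().split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


phrase = "Paris Hilton"
unigrams = ngrams(phrase, 1)  # single words
bigrams = ngrams(phrase, 2)   # two-word phrases
print(unigrams, bigrams)
```

Splitting on whitespace is a simplification. A real system would also handle punctuation and would still need something like Word2Vec to decide which “Paris Hilton” is meant.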

In more modern approaches, NLP algorithms try to break sentences, phrases, or whole documents down into Knowledge Graph items. This is not an exact science, but machines are now pretty good at it. However, there is a big gap between an NLP algorithm that extracts “Named Entities” (such as famous personalities, cities, and things that typically start with a capital letter) and an algorithm that is able to identify and extract wider topics, such as “walking” or “love.” This is an important distinction for SEOs, because Google’s NLP API, for example, is very strong at reporting the former but much weaker at understanding the latter. Ongoing research by InLinks shows that Google’s API only reports 15% of the topics that InLinks sees in the same page of text.

It is unlikely that Google does not understand generic topics. It is simply that these topics are not what Google would call important enough to show in their API output. But given that these topics all have Wikipedia articles, for the SEO, seeing these topics at a more granular level is gold, as we will show later.

So – an NLP takes text… sentence by sentence… and turns it into a connected set of entities. Take the sentence:

“Tomorrow, I will fly to Austin, Texas, to speak at Pubcon.” The entities might be:

- https://en.wikipedia.org/wiki/Tomorrow_(time)

- Dixon Jones: https://g.co/kgs/ewQcPU

- https://en.wikipedia.org/wiki/Flight

- https://en.wikipedia.org/wiki/Austin,_Texas

- https://en.wikipedia.org/wiki/Public_speaking

- Pubcon: https://g.co/kgs/jJodTf

Google’s API would almost certainly identify “Austin, Texas” as a named entity. However, it would be unlikely to identify “tomorrow” or “flight”, even though these are well-defined topics with their own Wikipedia pages. Furthermore, entities like “Dixon Jones” and “Pubcon” do NOT have Wikipedia pages, but as you can see from the knowledge graph links (g.co), Google obviously has a pretty good understanding of these topics and has entities for them.

An NLP algorithm may also need more information than just the text to be able to make the correct associations with ideas. In this sentence, the word “I” only relates to Dixon Jones if you have context. It might be other sentences on the page or the author bio, for example.

So… Entities and Topics are, in some regards, interchangeable. But annoyingly, not always. An “entity” is a topic with a record in a knowledge graph, and a topic is… well… a topic. The topic does not necessarily have a record in a knowledge graph. But it might have a record in SOME knowledge graphs. Wikipedia is a knowledge graph, but there are at least two entities in Google’s knowledge graph in the example above without Wikipedia URLs. In fact, if you type “define flight” into Google, they put a very short definition (from Wikipedia) with a share link: https://g.co/kgs/VqHL5v but this link only goes to a search result for “flight,” not a knowledge panel. But it still has a Google Knowledge Graph ID: /m/01515 as you can see here.

The Importance of Salience in NLP (and SEO)

With the simple 11-word sentence used above having at least six entities, imagine how many entities are in a 2,000-word article. This brings us to the question of “Salience.” A major part of an NLP algorithm is not deciding whether a topic exists on a web page but whether the topic is SALIENT to that web page. Salience is a word that means relevant & important.

One piece of research by Google on Salience gives us a very strong signal about how Google uses NLPs and how it might go about categorizing a topic as “salient.” This paper was probably written around 2010 (certainly on or after 2009) and describes a model that creates a binary decision as to whether a topic on a page is relevant. But we know from a later study by InLinks using Google’s then-public version of their NLP API that they later used a variable called “ResultScore” rather than a binary measure of salience.

So this suggests that the salience of “entities” on a page is somewhat fluid.

This – then – gives us the role of the SEO in optimizing for NLP. It is the task of the SEO to help clarify (where they can) what topics are important in the article so that any NLP algorithm can extract the topics in a way that is clear. NLP SEO is about disambiguation, not about optimization.

I have many metaphors in presentations that help describe this, but today I will use the metaphor of Maps. Think about: A Globe like the one you had in the classroom at school; Google Maps; Ordnance Survey Maps; and Apple Maps.

All of these will show you where things are on a map. The globe, however, will be better at showing countries. The Ordnance Survey map (A mapping organisation in the UK) will show you a phone box location in the Yorkshire Dales much better. But all of them will be able to point you in the right direction if you plan to travel from Edinburgh to London. They all just do so with a different granularity.

So it is with NLP SEO… you need to decide on the direction of travel for your content and the content’s granularity. In other words, if you talk about “flying to Austin, Texas,” you really do NOT need a paragraph on “flying,” but you MIGHT want a whole page on Austin (but not Texas). It is not clear cut, and nothing ever is in SEO, but to YOU and YOUR AUDIENCE, it SHOULD be clear cut.

How InLinks leverages NLP for SEO

InLinks is a bit more like the Ordnance Survey map than Google Maps when it comes to granularity, as it tries to extract EVERY entity from a piece of text, not just the “salient” ones. However, salience is inferred by the number of times an entity is seen on a page, and further weighting can be given to whether it appears in headings, the page title, or the first paragraph, for example. These are all very similar to the weightings that Google and other researchers suggest, but by being more aggressive in listing all the entities (salient or otherwise), we can allow the SEO to do their magic, which is to make the most important topics more obvious to a search engine. This, in turn, clarifies the content on the page, which should mean it is returned more accurately when the entities Google finds in the user search query match the entities salient to the article you have on the topic.
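As a rough illustration of inferring salience from counts and placement, here is a minimal Python sketch. It is not the actual InLinks scoring. The weights, the location labels, and the `salience_scores` function are all assumptions made up for this example.

```python
from collections import Counter

# Hypothetical weights: these numbers are illustrative assumptions only.
WEIGHTS = {"title": 3.0, "heading": 2.0, "first_paragraph": 1.5, "body": 1.0}


def salience_scores(mentions):
    """mentions: list of (entity, location) pairs found on one page.

    Returns each entity's share of the total weighted mentions (0 to 1).
    """
    totals = Counter()
    for entity, location in mentions:
        # Unknown locations fall back to the plain body weight.
        totals[entity] += WEIGHTS.get(location, 1.0)
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {entity: score / grand_total for entity, score in totals.items()}


page_mentions = [
    ("Austin, Texas", "title"),
    ("Austin, Texas", "body"),
    ("Pubcon", "heading"),
    ("Flight", "body"),
]
scores = salience_scores(page_mentions)
for entity, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{entity}: {score:.2f}")
```

Moving an entity into the title or a heading raises its share without adding a single extra mention, which is the kind of clarification the SEO can provide.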

InLinks does this in a number of ways.

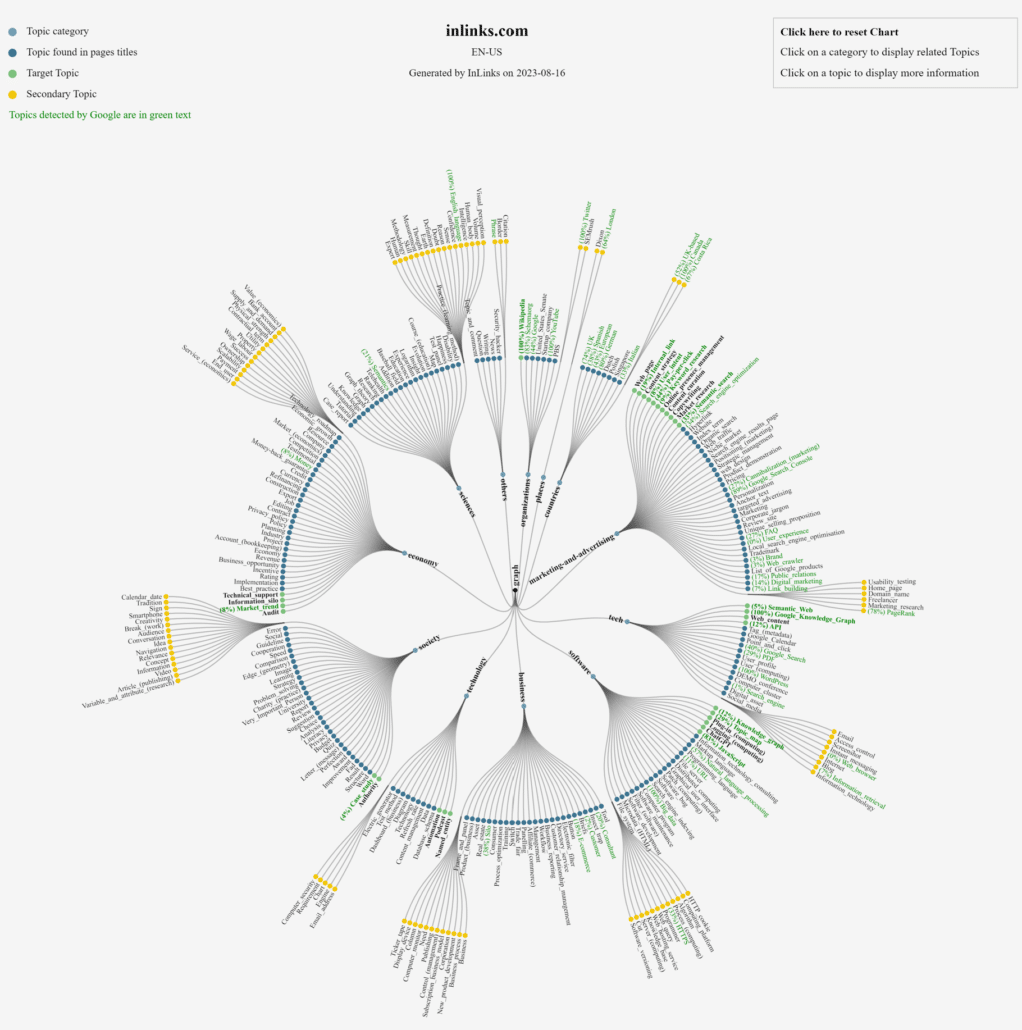

Using NLP to create a site topic map and to see gaps in Google’s NLP API

When you start a project on InLinks, every page that you add to the project is analyzed with our NLP. It is also analyzed by Google’s NLP API. The result is a comprehensive Topic Map of the site. This can be displayed in both tabular form and in a Topic Wheel. The tabular format is helpful in seeing which pages talk about which topics. But in the Topic Wheel, we also show you what percentage of the time Google reports the salience of an entity. So – if we see an entity on three pages and the entity is shown in green with a (33%) beside it, then Google’s NLP API reported the entity as salient in only 1 of those three instances. In itself, this is not a definitive path to action because Google might never report some entities through this API, but we can use this information to help identify underperforming pages. Other tools in the InLinks suite build on this and give great insights and actionable ideas, as described below.
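The percentage is simple arithmetic, sketched below in Python. The function name and the page paths are hypothetical, and the sketch assumes you already have two lists: the pages where the entity was found, and the pages where Google’s API also reported it.

```python
def reported_percentage(pages_with_entity, pages_reported_by_api):
    """Percentage of pages containing the entity where the API also reported it."""
    found = set(pages_with_entity)
    if not found:
        return 0
    reported = found & set(pages_reported_by_api)
    return round(100 * len(reported) / len(found))


# The entity appears on three pages, but the API reported it on only one.
pages_with_entity = ["/austin-guide", "/pubcon-recap", "/speaking"]
pages_reported_by_api = ["/pubcon-recap"]
print(reported_percentage(pages_with_entity, pages_reported_by_api))
```

A low percentage on an entity that matters to you is a prompt to look at the pages where it went unreported.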

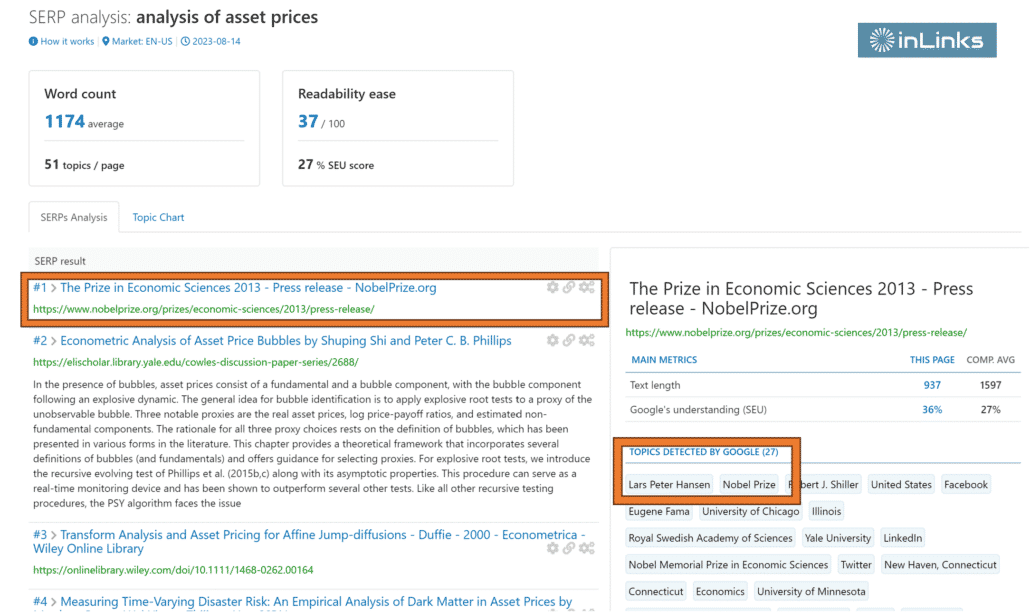

Find the correct topics to talk about by analyzing a trusted seed set with their own NLP algorithm.

When you “Create a brief” using InLinks, we generally take the top 10 pages that rank for any given search phrase and treat these as a “trusted seed set.” The idea is that if these are the 10 “best” pages to answer a search term, then they must be talking about the most “salient” topics. By counting the number of times each page uses each topic and by using weightings like H tags and titles, we can see the topics that stand out as important. We then use this to generate a content plan for your writer (or, these days, the AI assistant if you insist!).
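The aggregation across a seed set can be sketched roughly as follows. This is an assumption-laden illustration rather than the InLinks implementation: the `rank_topics` function, the `min_pages` threshold, and the sample scores are all invented, and each page is assumed to arrive as a mapping of topic to an already weighted mention score.

```python
from collections import defaultdict


def rank_topics(seed_pages, min_pages=2):
    """seed_pages: one dict per page, mapping topic -> weighted mention score.

    Keeps topics seen on at least min_pages pages and ranks them by
    (number of pages, total score), highest first.
    """
    page_counts = defaultdict(int)
    total_scores = defaultdict(float)
    for page in seed_pages:
        for topic, score in page.items():
            page_counts[topic] += 1
            total_scores[topic] += score
    kept = [topic for topic in page_counts if page_counts[topic] >= min_pages]
    return sorted(
        kept,
        key=lambda topic: (page_counts[topic], total_scores[topic]),
        reverse=True,
    )


# Three hypothetical pages from a seed set, with made-up scores.
seed_set = [
    {"Pubcon": 6.0, "Austin, Texas": 4.0},
    {"Pubcon": 5.0, "Public speaking": 2.0},
    {"Pubcon": 3.0, "Public speaking": 1.0, "Austin, Texas": 1.0},
]
print(rank_topics(seed_set))
```

Ranking by page count first favors topics that most of the seed pages agree on over topics that one page mentions heavily.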

But if you are a VERY wise SEO, you will know that taking the top 10 results from Google may not really be the BEST definition of a “Trusted seed set.” Google might have YouTube URLs in there… or Amazon pages. In fact, you may have a much more granular knowledge of your subject than Google does. So we give you the opportunity to modify the seed list. If I were really trying to be the best in my niche, I probably WOULD modify the list because being the best is about much more than being at the top of Google! Let me elaborate.

Nobel Prize winners are quite clearly amongst the most authoritative voices in the fields that they win Nobel Prizes for, but their own writings RARELY appear in the top 10 Google search results. So – if you want to create the perfect content to rank for “the Analysis of Asset Prices”, you will get ten fine results on Google’s home page… but probably not direct insights from Lars Peter Hansen, who shared a Nobel Prize for this EXACT thing in 2013.

It is not that Google does not know this… look at the top result! But the result is about the Nobel Prize, not the research itself. This then implies that to get to the top of the SERPs, you should talk lots about Nobel Prizes and Lars Peter Hansen. That may get you ranking… but this result is NOT the expert result for the term “analysis of asset prices.” You can do better!

One thing you COULD do is swap out the Nobel Prize link (by clicking on the cog icon) and replace it with the text from THIS article/url. Unfortunately, this is a PDF (as are most of the articles on Lars Peter Hansen’s site), so you will first have to extract the text in this instance, but it is there for all to see – and to analyze.

Improving the choice of input URLs may well give you an edge… not just over other SEOs, but over other InLinks users. Doing so will use up a few more credits (one per URL you replace) but hey – you get the credits back next month!

Content Planning to write about the best topics for your SEO

Knowing what to write AFTER you have found the perfect search term is one thing. Knowing what to write about NEXT is something very different, and our NLP strategy comes in here as well. Our approach all stems from the Topic Map that we create when analyzing a website. This is generated using our NLP algorithm and defines the topics relevant to the site content. Our Content Planner tool then uses THIS to generate “Google Suggestions” in real time whilst you are working on a project. In other words, we use your browser to enter all the topics relevant to the project into Google Suggest to see what suggestions Google comes up with for the searcher. The NLP algorithm then runs over Google’s suggestions to see whether they will likely be relevant to the existing site’s content. If not, the suggestion is discarded. If it is, it is listed as a possible “content candidate.”
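The keep-or-discard step can be pictured with the short Python sketch below. Everything in it is a stand-in: `extract_topics` uses naive phrase matching in place of a real NLP algorithm, and the suggestions and site topics are invented for the example.

```python
def extract_topics(text, known_topics):
    """Stand-in for a real NLP step: naive case-insensitive phrase matching."""
    lowered = text.lower()
    return {topic for topic in known_topics if topic.lower() in lowered}


def content_candidates(suggestions, site_topics):
    """Keep only suggestions that mention at least one topic from the site's topic map."""
    candidates = {}
    for suggestion in suggestions:
        matched = extract_topics(suggestion, site_topics)
        if matched:
            candidates[suggestion] = sorted(matched)
    return candidates


site_topics = {"Pubcon", "Public speaking"}
suggestions = [
    "pubcon austin schedule",
    "public speaking tips for beginners",
    "cheap flights to austin",
]
print(content_candidates(suggestions, site_topics))
```

In this toy run the flights suggestion is discarded because it matches nothing in the topic map, while the other two survive as content candidates grouped by the topic they matched.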

From there, it is a simple case of the InLinks user going through the suggestions, which are conveniently grouped by topic, and adding the best ones into a “Content Queue.” This is an easy place for you to maintain and review your content plan, and you can create content briefs directly from the queue as well.

In Summary

NLP is all about Natural Language Processing (not Psychology). You can use NLP algorithms to break the content down into underlying topics and store them in knowledge graphs as “entities” in these semi-structured databases. This is done by parsing the text and deciding what the topics are and whether they are salient. Salience is a term used to signify relevance and importance in the context of a page.

InLinks uses NLP for SEO in several ways. It uses NLP to generate Topic Maps of websites and to find topic gaps. It also uses NLP analysis when optimizing or creating new content for SEO by creating topic maps for the “best of breed” pages around any given search term and using this to generate a content plan for either a human writer or the AI assistant (or a combination of both) to create something optimal that is more than the sum of its parts.

InLinks paid subscriptions start from $49 and are based on the number of pages added to a project. Sign up for yours today.

Thanks for reading. Thoughts welcome. Add a comment.
